Description

Remove duplicate rows based on keeping only unique values in selected columns

Example

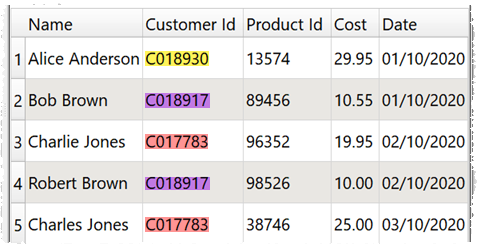

If you have a dataset of orders:

And you want to:

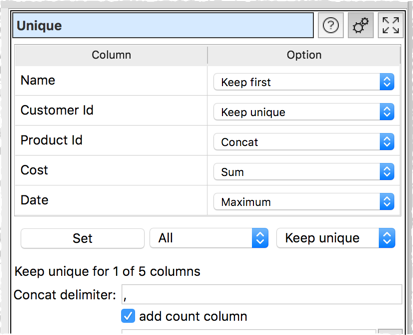

•keep one row per unique Customer Id

•keep the first listed Name for each Customer Id

•concatenate Product Ids for each Customer Id, delimited by a comma

•sum the Costs for each Customer Id

•keep the latest Date for each Customer Id

•add a Count column showing how many rows in the input correspond to each row in the output

You can set the following:

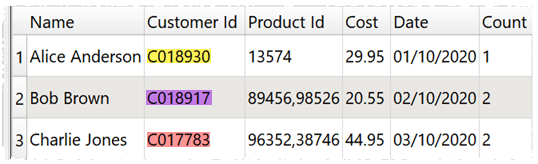

To get:

Inputs

One.

Options

•Set an Option for each column:

oOnly 1 row is kept where all the Keep unique columns have the same value.

oKeep first keeps first value in the current sort order.

oKeep last keeps the last value in the current sort order.

oSum sums any numerical values. Blank values are ignored.

oMaximum keeps the maximum numerical or date value.

oMinimum keeps the minimum numerical or date value.

oAverage takes the average (mean) of any numerical values. Blank values are ignored.

oConcat to concatenate values. Duplicate values are kept. All values are treated as text.

oConcat unique to concatenate values. Duplicate values are ignored. All values are treated as text.

•Use the Set button to quickly set the option value for multiple columns.

•Set Concat delimiter if you want to add a delimiter between Concat or Concat unique values

•Check add count column to add a column showing how many rows in the input dataset created each unique row.

Notes

•Rows are considered duplicates if they have exactly the same value in all the columns set to Keep unique. Comparisons are case and whitespace sensitive. You can use Case and Whitespace to change case and whitespace before deduping.

•If no columns are set to Keep unique the transform won't do anything.

•If you are using Keep first or Keep last the sort order is important. You can use Sort to change the sort order before deduping.

•The Dedupe transform is a less powerful (but simpler and faster) alternative to Unique.